Many regulations govern the behaviour of large numbers of individuals (including specific groups such as health professionals) and/or small firms. This note discusses a number of choices that need to be made when deciding how to draft and enforce such regulations.

It is important to remember that - however justified they are - regulations interfere with our freedoms. They should therefore be designed intelligently, and enforced with humanity. Regulations need to include clear boundaries. But it is not necessary to impose heavy penalties on inadvertent and/or minor transgressions. As rugby referee Nigel Owens put it: 'Knowing when to blow the whistle is the easy job of refereeing. The secret is knowing when not to blow it'. (There is an example at the end of this web page.)

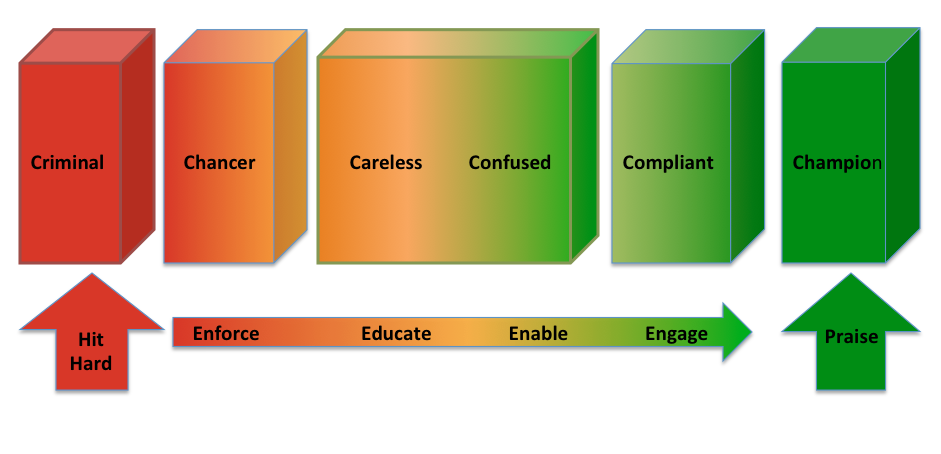

It is certainly quite wrong to assume that everyone is likely to misbehave. It would be equally wrong to assume that there are no rogues amongst the regulated population. The vast majority will wish to comply with regulations, but may find it difficult to do so. The real world range has been beautifully summarised in this diagram prepared by Campbell Gemmell of the Scottish Environment Protection Agency.

The clear lesson is that ‘one size does not fit all’. Most obviously, the careless and confused need to be dealt with rather differently from champions and the compliant – and very differently from chancers and criminals. Appropriate approaches are summarised in the above diagram.

(I have prepared an animated PowerPoint slide which introduces each element of the above diagram, one at a time. Click here to download it and then click Slide Show followed by Play from Start. Each further click will then bring up a new element.)

Professor Christopher Hodges wrote a report (Ethical Business Regulation: Understanding the Evidence) for the Better Regulation Delivery Office which examined the principal research in this area. It was published in early 2016.

Another important lesson is that it is vital to involve the target population in designing and publicising regulations that are intended to change the behaviour of those individuals. There is no point in designing elegant regulations that are likely to be ignored, or easily circumvented, on the ground. Members of the public will readily engage in exercises which are aimed at improving their situation, or which might impact their private or business behaviour. And they will be realistic about what will work and what will not. It is absolutely wrong to take a condescending attitude which dismisses the views of the supposedly uneducated or ill-informed.

Small Firms Exemptions?

There is clearly a lot to be said for reducing the burden of regulation by exempting smaller firms from certain regulations. On the other hand … is it a good idea to send the message that it is OK for smaller firms, for instance, to treat their employees less well than larger firms? And is it acceptable for smaller firms to damage the environment, or take greater risks with health and safety, than their larger competitors? Is there not a risk of creating a somewhat unattractive small firms ghetto?

It may also not be a good idea to tell the owners of small firms that a consequence of growing larger (and/or employing more people) will be that they will suddenly be subject to more intrusive regulation. The owners of British small firms all too often seem less ambitious than their overseas competitors – especially Americans – and all too willing to remain as niche players, or sell out to a bigger company. Why make their growth pains any worse? In general, therefore, it is better to ‘think small first’ when designing regulations. And if small firms will find it hard to understand and/or comply with a regulation, is the regulation really necessary?

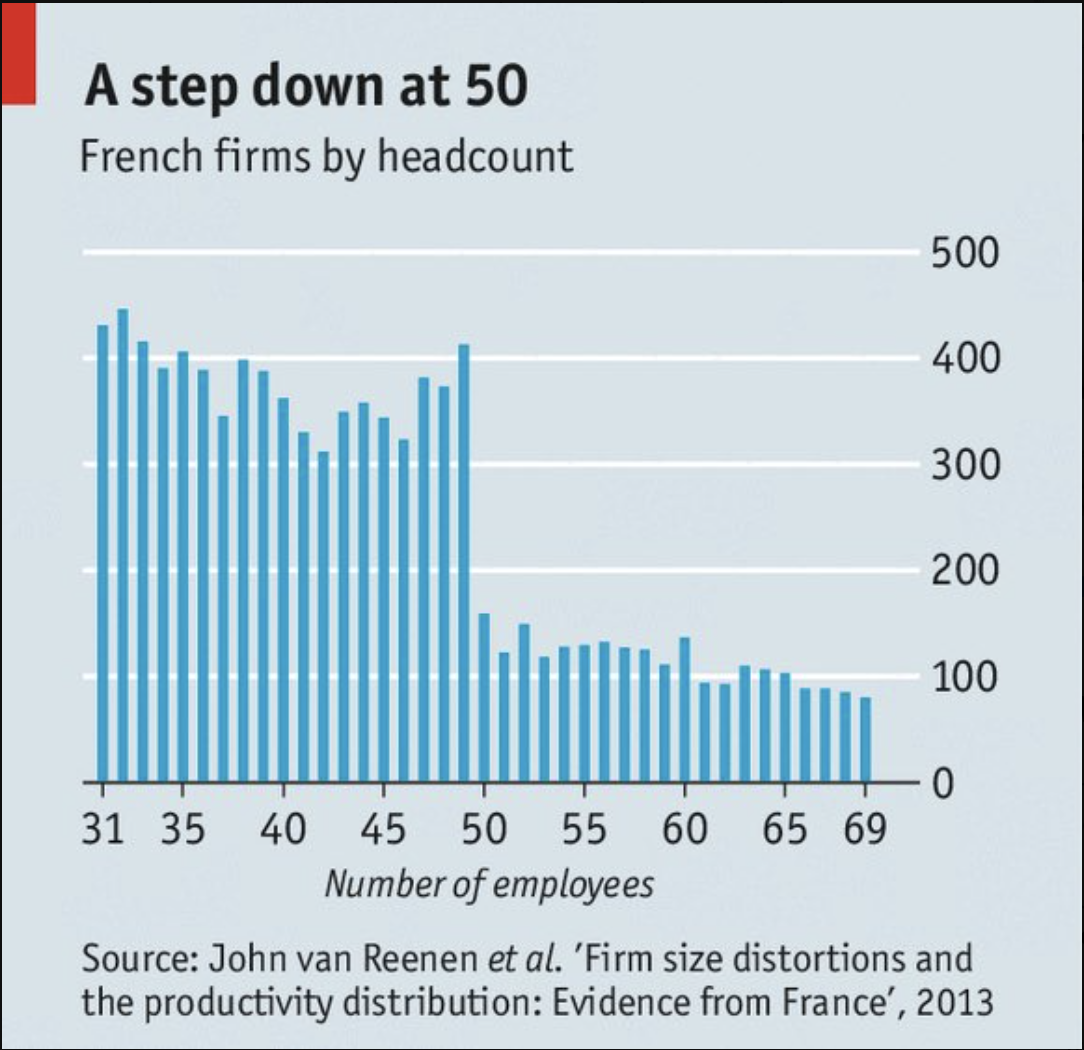

Many French employment regulations apply only to employers with more than 50 employees. This chart suggests that many prefer not to grow above that size.

As of November 2017, the UK small firms VAT exemption was £85,000 - more than five times the comparable German level of £15,600 - and it is seen by many as a significant impediment to growth. It is therefore being frozen at that level whilst the government decides what to do about it.

One-Stop Shops?

There is also clearly much to be said for reducing the number of regulators that interact with smaller firms. But this can be taken too far. Once a firm has got used to a new regulation, its questions tend to be quite specific, and about unusual situations. Such questions need expert answers, so those representing ‘one stop shops’ either need to be very well trained and knowledgeable or they need to be very good at networking with regulatory experts. Such staff may need to be paid good salaries, as are their private sector equivalents (lawyers and accountants, for instance). But the public sector is unlikely to be willing to provide such staff. The best approach, therefore, is to ensure that smaller firms are given access to account managers or similar who can deal with a limited range of regulatory issues in which they are reasonably experienced. Such advisers should not, for instance, attempt to be experts in all of tax, employment, and health and safety law and practice.

The Regulatory Sandbox

This was a neat innovation by the Financial Conduct Authority. The 'sandbox' is a safe space where young internet firms are allowed to make mistakes without encountering the full force of FCA punishment.

Keep it Simple – or Certain?

Frank Pasquale perceptively summarised regulators' double bind as follows:

- Draft a simple rule >>> "That's far too blunt; we need nuance!"

- Draft a nuanced rule >>> "Oh, the complexity and compliance costs are killing us!"

It is certainly true that smaller firms, and individuals, will never wade through lengthy legislation or guidance. Equally, they like certainty: clear (but therefore detailed and lengthy!) rules to which they can refer in complex or unusual situations. In the UK at least (and unlike elsewhere in Europe where officials are generally given much more discretion) it is probably best to err on the side of certainty, but ensure that web-based guidance is clear, authoritative and easy to navigate. Read on for some further thoughts on the application of discretion. Also click here for a discussion of the issues associated with the transposition of EU legislation into UK law.

What about the Individualist?

There are of course a number of individuals who hate to be regulated even if - in their quieter moments - they recognise the good intentions that underlie the regulations that so incense them. And it is probably true that such individuals are more likely to be risk takers - including entrepreneurs and adventurers. But it is generally recognised - at least in the UK - that wider society should not seek to protect such people from the consequences of their own behaviour, although it may need to seek to protect others. There are some interesting papers on the regulation of risks to health and safety in the risk section of this website.

I was particularly struck by the 2016 official report into a powerboat accident on the Solent. The boat flipped over at speed - a well known risk - but none of the occupants was wearing their safety harness or helmet. (There was no regulation mandating the use of such equipment, although it would have been compulsory had the boat been racing rather than just practising.) Three of the occupants escaped from the upturned boat but one, the driver’s son, was unconscious inside the cockpit and would have died if the driver had not dived back under the boat and brought his son to the surface, where he was resuscitated. The more astonishing facts were that 'The driver held a commercially endorsed Royal Yachting Association (RYA) Yachtmasters’ certificate of competency. He had over 30 years’ experience as a powerboat driver and had extensive knowledge of the waters of the Solent. [He was also] a former offshore powerboat racing world champion and held 21 powerboat speed and endurance records. He was the former manager of the RYA’s powerboat racing and motor boat department, a post he held for a number of years.' Further comment would probably be superfluous.

Are Algorithms better than Humans?

New Scientist Magazine (7 September 2013) carried an interesting article by Katia Moskvitch about the increasing use of cameras, facial and number-plate recognition software and intelligent computerised analysis to ensure that we comply with road traffic and other regulations. Many of the improvements in the algorithms underlying law enforcement are positive. Speed cameras, for instance, can pick out newcomers to an area and let them off a speeding fine if they are only a little over the speed limit. But these technologies also permit highly rigorous and unforgiving enforcement, facilitating the punishment of accidental transgressions, without any human involvement in the decision-making. For algorithms, all decisions are binary; a big contrast (in the UK at least) from our tradition of having law enforcement moderated by human police officers, jury-members and judges. As Ms Moskvitch notes, 'our society is built on a bit of contrition here, a bit of discretion there'.

The Bill Gates Problem

Reduced toleration of discretion may be leading to greater unwillingness to forgive certain minor criminality and youthful exuberance. Back in the late 1960s, Microsoft founder Bill Gates in effect stole discarded printouts of certain source code which was 'tightly held by the top engineers and off-limits to [Gates]'. He and his friends then 'tried to beat the system by getting hold of an administrator's password, hacking into the internal accounting system file, and breaking the encryption code'. (Note 1) He was caught but wasn't punished, leading one to wonder what would happen to a modern hacker in similar circumstances. Extradited to the USA maybe? I certainly sense that a police officer or regulator would need to be ready to account for any leniency, making the exercise of discretion somewhat less likely.

On the Other Hand ...

... I am also struck by society's failure to punish certain dangerous behaviour if, as luck has it, little damage is caused. An assault will be punished much more severely if it leads to serious injury or to death. Ditto bad driving. Speeding through a red light will be much more severely punished if it causes an accident and injury. There is no logic to this. The person whose behaviour is being punished cannot and does not foresee the exact consequence of their action, and yet is punished as if they had clear foresight.

I confess, however, that I cannot see a practical alternative. But I do urge regulators to apply common sense to their enforcement activity, and be willing to stand up to those who would reduce all enforcement decision making to little more than numerical and box-ticking exercises.

Example

What would you do - if you were a civil servant responsible for improving vehicle and road safety - if you saw this car? The MoT - 'No Pass, No Pay' offer appears to put a lot of pressure on the testing garage to pass dangerous vehicles as road-safe.

("MoTs" are "Ministry of Transport" tests which aim to remove dangerous older vehicles from the road. I have removed the MoT tester's contact details.)

1. You could ignore it.

2. Or would you mount a formal investigation to see if you could prove that vehicles were passing the test when they shouldn't? You could then maybe prosecute and/or close the business down?

3. Or you could warn the company and then monitor its behaviour.

Unless I had information to the contrary, I think I would choose '3' on the basis that the company was most likely simply indulging in OTT advertising. But '2' would be a reasonable choice too.

And '2' should definitely have been chosen when it was noticed that a privatised Building Control organisation (responsible for ensuring that builders follow regulations so that buildings are safe to live in) distributed promotional material saying that 'Plans are never rejected'. It was that sort of attitude that contributed to the Grenfell Tower disaster.

Note 1: These are quotes from Walter Isaacson's The Innovators: How a Group of Hackers, Geniuses and Geeks Created the Digital Revolution.